Microsoft and others have helped to expedite moving the Ukrainian government’s systems to the cloud. They are offering various forms of monetary assistance, presumably with hosting, integration, migration, and especially, cybersecurity, according to this article. Russia continues to be a formidable worldwide cyber power and threat.

Abnormal Security?

This company deserves an award for its name alone. In times like these, an interesting approach to cybersecurity is to think well outside the normal range of security groupthink. So, “abnormal” may be the right way to approach cybersecurity.

Azure Global Compliance Overview

This is an excellent worldwide view of Azure compliance: the global compliance standards Azure supports, summarized by nation. The PDF is here:

Cloud Compliance and Security

This “Cloud One” product offering from Trend Micro looks very promising. In this day and age of explosive cloud service growth, monitoring of cloud services for infrastructure, security and compliance is essential.

What does it do? Cloud One does the following and more:

“Run continuous scans against hundreds of industry best practice checks, including SOC2, ISO 27001, NIST, CIS, GDPR, PCI DSS, GDPR, HIPAA, AWS and Azure Well-Architected Frameworks, and CIS Microsoft Azure Foundations Security Benchmark.”

Microsoft Azure Advisor

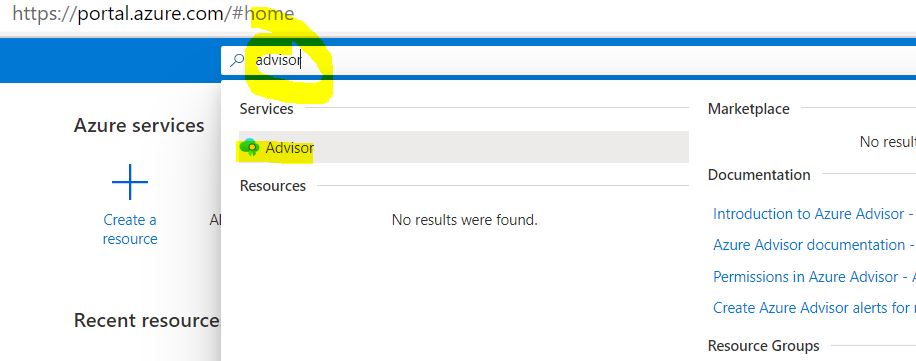

Microsoft Azure Advisor is a super useful tool that gives administrators pertinent recommendations for improving their services and moving them toward best practices. Azure will occasionally prompt administrators at login, but to get to it manually, simply type “advisor” into the search box on the home screen.

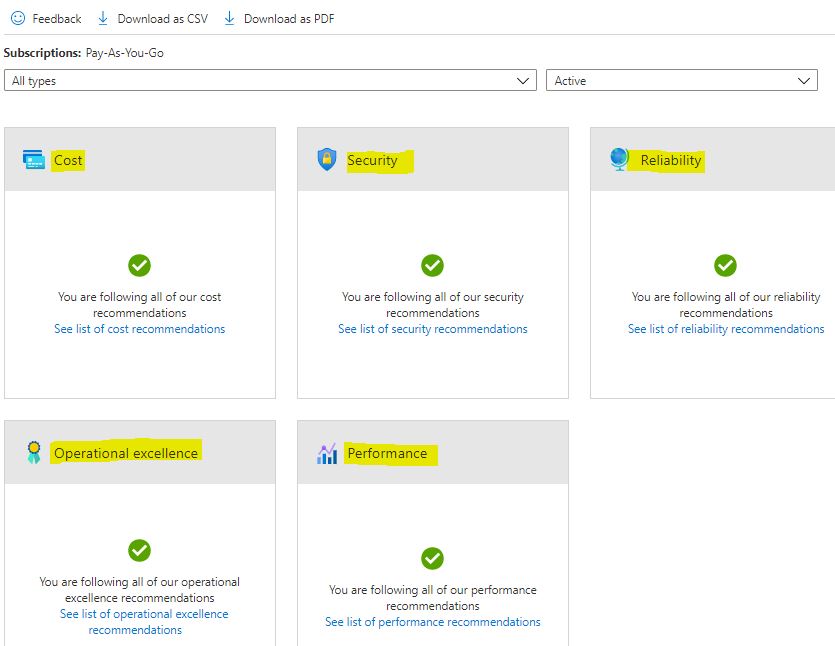

The categories focus on cost, security, reliability (a.k.a. high availability), operational excellence, and performance. In a perfect world, we would always see all these wonderful green check marks, as in the screenshot. Admittedly, my ever-changing Azure account is currently limited, so the green was easy in this case. It is quite normal to see low-, medium-, and high-impact recommendations, each with a description of its effect on services.

Microsoft Training and Certifications Overview Poster

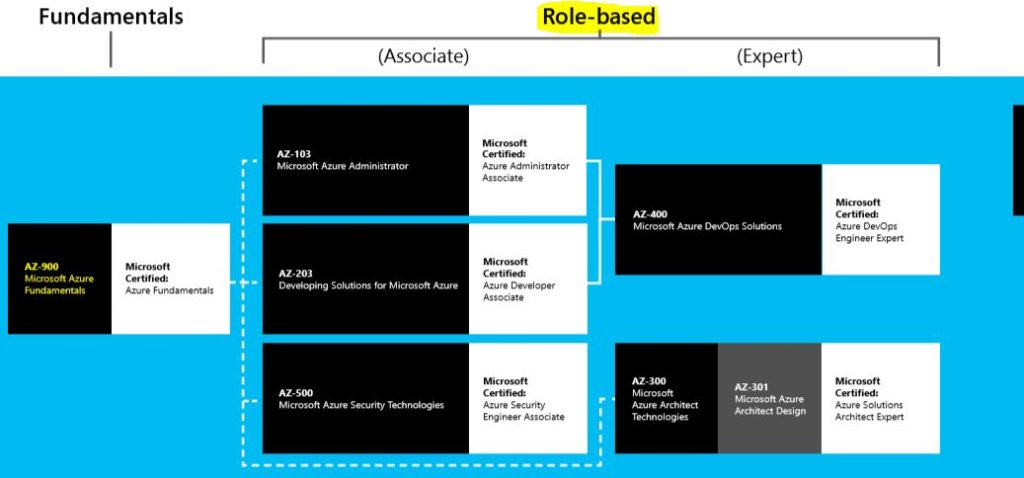

This new online Microsoft poster is an excellent overview of all the certifications available from Microsoft in 2019-2020 and beyond. In my opinion, it is laid out really nicely, and I appreciate the clarity of its four top-level categories:

Apps and Infrastructure; Data and AI; Modern Workplace; Business Applications

I personally am focused on “Apps and Infrastructure”, as that is more in line with my Systems Administration background. But honestly, all four areas are very interesting. I have already completed AZ-900 and am now focusing on the Azure Administrator certification. The image shows only a snippet of the entire poster, which lays out possible career paths for all levels of Windows IT pros.

Microsoft Certification Poster condensed URL:

Kubernetes, or K8s

I did not know about the shorthand reference to Kubernetes: “K8s” (the 8 stands for the eight letters between the “K” and the “s”). I am studying to take the Microsoft Azure Administrator certification exam and came across this little fun fact on the Microsoft “Learn” website, which I am using to prepare. It has great modules for both conceptual and hands-on lab learning. But I must admit, K8s is a new one to me!
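For the curious, this style of abbreviation (first letter, number of letters in between, last letter) is easy to reproduce. Here is a minimal sketch in Python; the function name `numeronym` is my own, not something from the Learn site.

```python
def numeronym(word):
    """Abbreviate a word as first letter + count of middle letters + last letter."""
    if len(word) <= 3:
        # Too short for the abbreviation to save anything; return unchanged.
        return word
    return f"{word[0]}{len(word) - 2}{word[-1]}"


print(numeronym("Kubernetes"))            # K8s
print(numeronym("internationalization"))  # i18n
```

The same pattern is behind other abbreviations you may run across, such as “i18n”.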

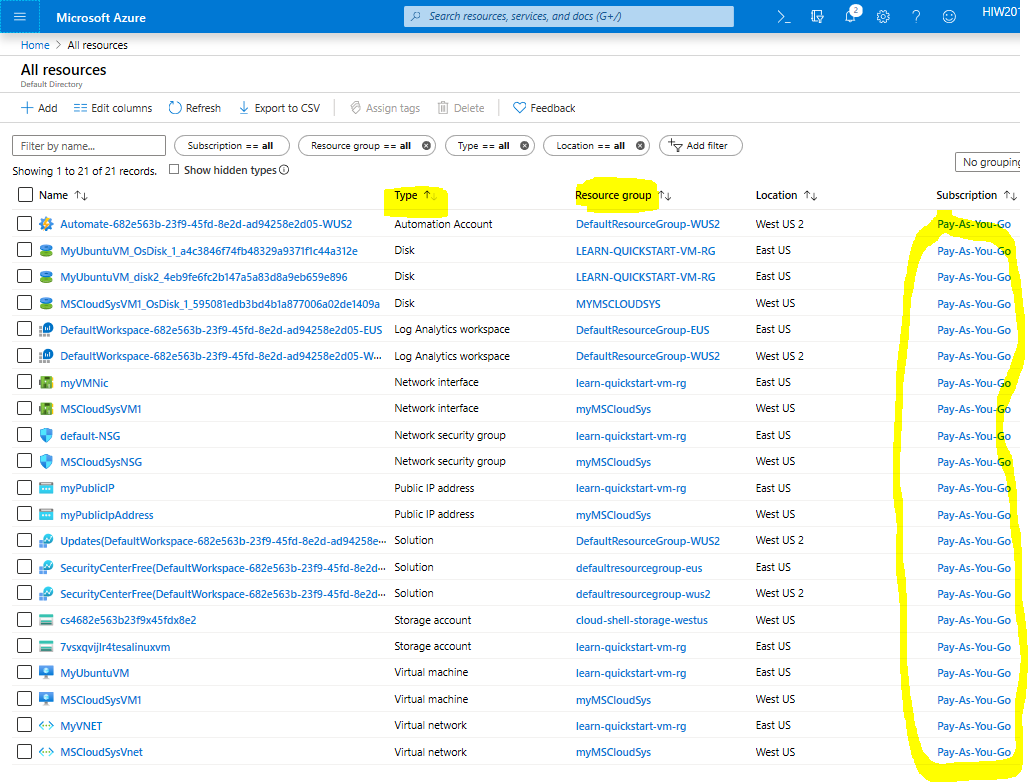

Cloud Billing and Surprise Costs

That moment when you are studying for Azure Cloud exams, working off the Microsoft Learn website, and then realize the charges on your personal Azure account are GROWING.

The reality is that the bulk of these costs is covered, given that the Learn site utilizes sandboxes for hands-on learning. But there were a few situations where using a regular Azure account was required. Also, since these resources exist only for learning and certification exam preparation, they can simply be deleted afterward.

Of course, it is always best to monitor costs. The Azure Management section provides helpful cost analysis, budget monitoring, and optimization tools.
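To get a feel for what budget monitoring boils down to, here is a rough sketch of a straight-line projection in Python. It is only back-of-the-envelope arithmetic, not how Azure's own tools forecast costs, and the function names and dollar figures are made up for illustration.

```python
import calendar
from datetime import date


def projected_month_end_cost(spend_to_date, today):
    """Project month-end spend by extending the average daily spend so far."""
    days_in_month = calendar.monthrange(today.year, today.month)[1]
    return spend_to_date / today.day * days_in_month


def budget_status(spend_to_date, budget, today):
    """Classify spend against a monthly budget (hypothetical thresholds)."""
    if spend_to_date >= budget:
        return "over budget"
    if projected_month_end_cost(spend_to_date, today) >= budget:
        return "on track to exceed budget"
    return "within budget"


# Hypothetical numbers: $12 spent by April 10 against a $30 monthly budget.
print(budget_status(12.0, 30.0, date(2024, 4, 10)))
```

With these sample numbers, the spend so far is under budget, but the projection for the full month is not, which is exactly the kind of early warning worth having while experimenting.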

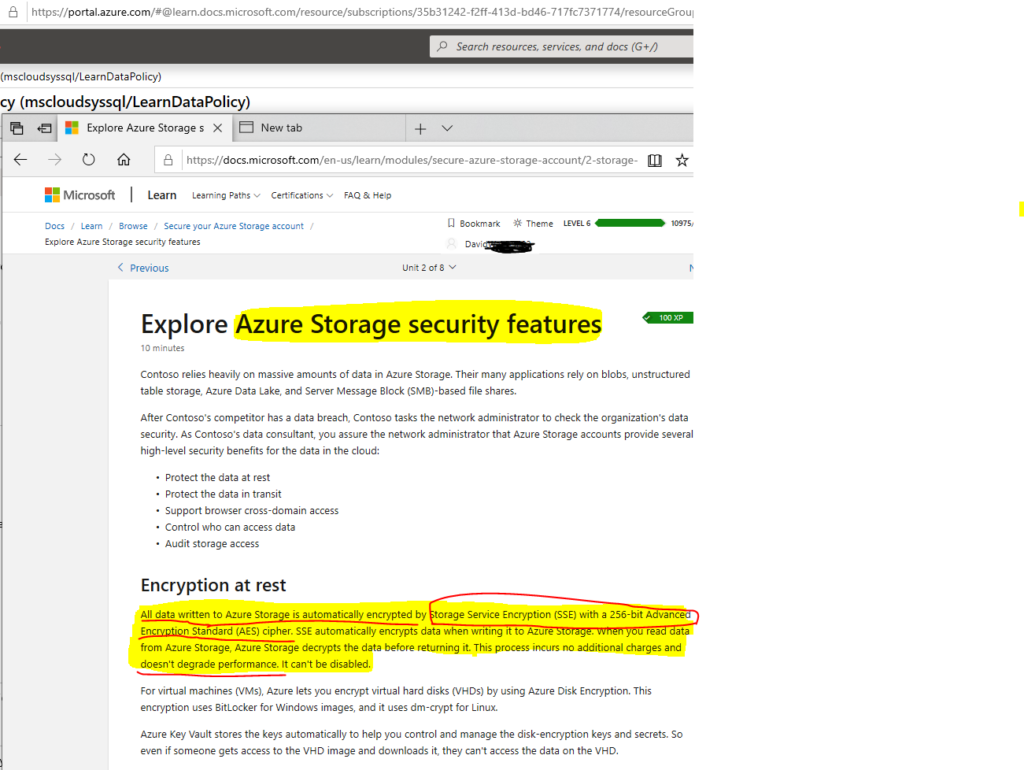

Microsoft Azure Storage Security

I am studying the Microsoft Azure Administrator modules on the Microsoft “Learn” website. It is a great free resource for learning some of the hottest and most relevant modern Cloud technologies. One particular area piqued my interest: data storage security. I know that many businesses and leaders are pessimistic about the protection of their Cloud data. It makes sense. Why would any leader not think about the way in which their organization’s data is stored in the Cloud? To many leaders, the notion of their valuable data being moved to and handled in the Cloud does not necessarily make them feel warm and fuzzy [as we may see in the commercials ;> ]. Instead, they have a healthy skepticism about how their data is handled. I agree with that healthy skepticism.

But Microsoft Azure has many ways to secure data. These include, but are not limited to, proper network security rules to block out most or all traffic; access control lists; strict internal role-based access control; and good old-fashioned data encryption.

Azure automatically encrypts all data as it is written to cloud storage, i.e. “at rest” [meaning it is sitting on the disk, so to speak]. Any file that is written to Azure storage is encrypted with Storage Service Encryption (SSE), which uses 256-bit AES. This is very strong encryption and an industry standard. My favorite part of SSE is that, per Microsoft, encrypting the data that gets stored to disk does NOT affect performance. Encryption involves scrambling bits and bytes and generally takes some resources, but the service handles it transparently, with no degradation to your services.

Of course, in addition to SSE, the actual virtual disks themselves, if applicable, can be encrypted as well, with ‘BitLocker’ for Windows or ‘dm-crypt’ for Linux. But I wanted to focus only on Storage Service Encryption at this point. And SSE should help any leader breathe a sigh of relief when thinking about their data security.
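If you want to see the encryption-at-rest settings for yourself, a storage account's properties can be exported as JSON and inspected. Below is a minimal Python sketch that reads such a document and lists the services with encryption enabled. The sample JSON is hand-written and trimmed; I am assuming its field names (`encryption`, `keySource`, `services`, `enabled`) resemble what the Azure CLI returns for a storage account, so check them against your own output.

```python
import json

# Hand-written, trimmed sample; the account name is hypothetical and the
# field names are an assumption about the shape of the real output.
SAMPLE_ACCOUNT_JSON = """
{
  "name": "examplestorage",
  "encryption": {
    "keySource": "Microsoft.Storage",
    "services": {
      "blob": {"enabled": true},
      "file": {"enabled": true},
      "queue": null,
      "table": null
    }
  }
}
"""


def encrypted_services(account):
    """Return the sorted names of services whose encryption flag is enabled."""
    services = account.get("encryption", {}).get("services") or {}
    return sorted(
        name for name, cfg in services.items() if cfg and cfg.get("enabled")
    )


account = json.loads(SAMPLE_ACCOUNT_JSON)
print(account["encryption"]["keySource"])
print(encrypted_services(account))
```

A key source of `Microsoft.Storage` in this sample would mean the keys are managed by Microsoft rather than by the customer.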

Microsoft Learn can be reached here

Add Azure VM with Cloud Shell

I created a page with a simple guide on how to add a virtual machine to Microsoft Azure. The guide does not cover doing this from within the Azure Portal; instead, the VM is added by using the Cloud Shell.

Read the instructions for creating a new VM via Cloud Shell here.
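As a taste of what the Cloud Shell approach looks like, here is a small Python sketch that assembles an `az vm create` command line without running it. This is my own illustration and may differ from the steps in the linked guide; the resource group name, VM name, image alias, and admin username are all placeholder assumptions.

```python
import shlex


def az_vm_create_command(resource_group, name,
                         image="Ubuntu2204", admin_username="azureuser"):
    """Build (but do not run) an `az vm create` command line."""
    args = [
        "az", "vm", "create",
        "--resource-group", resource_group,  # must already exist
        "--name", name,
        "--image", image,                    # placeholder image alias
        "--admin-username", admin_username,  # placeholder username
        "--generate-ssh-keys",               # create SSH keys if missing
    ]
    # shlex.join quotes any argument that needs it for a POSIX shell.
    return shlex.join(args)


# Hypothetical names, for illustration only.
print(az_vm_create_command("learn-rg", "learn-vm"))
```

The printed command can be pasted into Cloud Shell once the resource group exists. Remember to delete the VM afterward if it was only for practice.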